The Mirror in the Machine: What AI Use Reveals About the Modern Empathy Gap

A year ago, a Reddit post went viral with the blunt headline: “PSA: CHAT GPT IS A TOOL. NOT YOUR FRIEND.” Its author aimed to warn people about the risks of relying on AI for emotional support, pointing to tragic real-world consequences and the fact that AI is not a licensed therapist.

But the delivery was telling.

It was peppered with phrases like “weirdos,” “glorified autocomplete,” and “shouting into the void,” and ended with the author walking away to “vent to a real person” about how strange everyone who uses AI for emotional support is.

I wrote my own response because I believe that kind of dismissive, attacking tone is exactly why people turn to AI in the first place.

If our "real-world" options for support come with a side of judgement, condescension, and being called a "weirdo" for having a hard time, of course a less judgemental algorithm feels safer. When we tell people who are struggling that they are "wrong" for finding comfort where they can — without offering a kinder, safer alternative — we miss the opportunity to truly support them, and in doing so, push them further towards AI for the understanding they aren’t receiving elsewhere.

Here is the perspective I shared then, which I believe is even more urgent today:

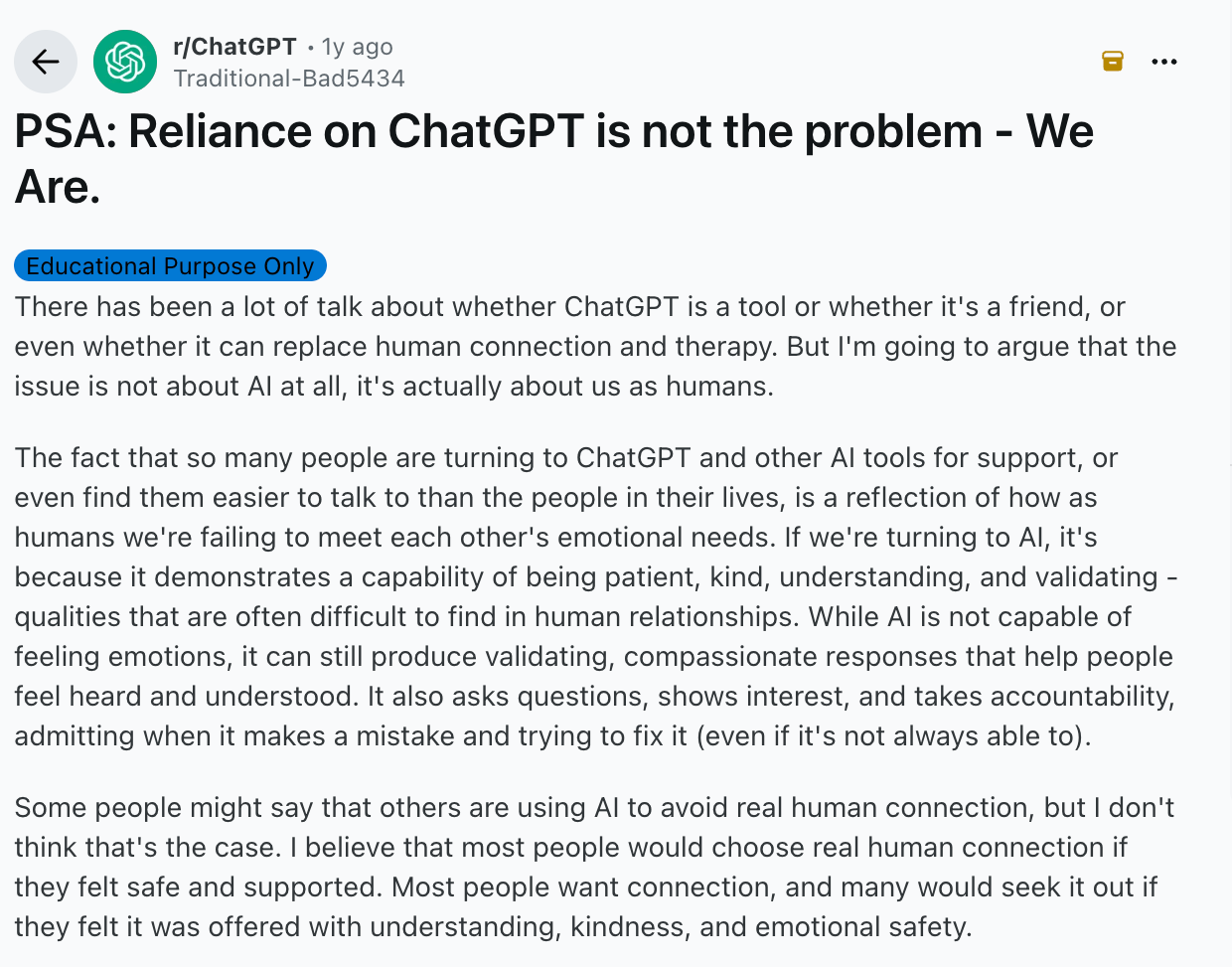

PSA: Reliance on ChatGPT is not the problem — We Are.

There has been a lot of talk about whether ChatGPT is a tool or a friend, or even whether it can replace human connection and therapy. But I'm going to argue that the issue is not about AI at all; it's about us as humans.

The fact that so many people are turning to ChatGPT and other AI tools for support, or even finding them easier to talk to than the people in their lives, reflects how we as humans are failing to meet each other's emotional needs. If we're turning to AI, it's because it can be patient, kind, understanding, and validating - qualities that are often difficult to find in human relationships. While AI is not capable of feeling emotions, it can still produce validating, compassionate responses that help people feel heard and understood. It also asks questions, shows interest, and takes accountability, admitting when it makes a mistake and trying to fix it (even if it's not always able to).

Some people might say that others are using AI to avoid real human connection, but I don't think that's the case. Most people want connection, and I believe they would choose it if it were offered with understanding, kindness, and emotional safety.

Some might argue that therapy is the solution, but it's not available to everyone. Finding the right therapist can be challenging, and even then, the cost, time constraints (searching for the right person or waiting for availability), past negative experiences, fear of vulnerability, stigma, and other barriers can hold people back from seeking help. Even the need for therapy itself highlights the lack of emotional support in our everyday lives. So many people don’t feel fully heard or supported by those around them, which is why they seek out alternatives like AI (or therapy, for that matter).

So here's the problem: it's not AI at all - it's us. If we're upset that people turn to AI for emotional support, it's really just a signal to ourselves to do better. We need to be better listeners, more compassionate, more understanding, and more emotionally available for the people around us. AI shouldn't be a replacement for human connection, but right now, its growing use simply reflects the emotional void that we - as humans - are failing to address.

So, the real question we should all ask ourselves is: Am I offering the type of support and kindness that others need? If we're concerned about the reliance that some people have on AI, maybe the answer is to become the kind of people who offer genuine care, interest, and support - so that others don't feel the need to turn to technology for it.